Rendering to Varjo headsets

This page gives an overview of the current Varjo API and explains the different features.


Introduction

In its simplest form, rendering for Varjo devices consists of the following steps:

- Choose the view configuration

- Create render targets (swap chains)

- Wait for the optimal time to start rendering

- Acquire target swapchain and render to it

- Release swap chain

- Submit the rendered contents as a single layer

Currently supported rendering APIs are DirectX 11, DirectX 12, Vulkan, and OpenGL.

Concepts

Varjo API has some important concepts for frame rendering:

- Views, each a viewport into the scene, such as the left context view or the right focus view

- Swap chains, which represent the images that can be submitted to Varjo compositor

- Layers, each of which contains the information for a single sheet of the final image. Multiple layers can be submitted to Varjo API and they will be combined into the final image.

Views

Varjo devices have two different view types: context and focus. Context is used to cover the whole field of view and focus is used to cover a smaller, more precise area.

Optimally, 4 views are used. Views are always handled in the same order: [LEFT_CONTEXT, RIGHT_CONTEXT, LEFT_FOCUS, RIGHT_FOCUS]. It is also possible to use only two views, in which case the order is [LEFT, RIGHT]. Other Varjo APIs that accept views as parameters assume that the views are given in the same order.
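
The fixed ordering can be captured in a small lookup so that code indexing per-view arrays stays readable. The following is a minimal sketch only: the enum QuadViewIndex and the helpers viewName and isLeftEye are hypothetical names for illustration and are not part of the Varjo API.

```cpp
#include <cassert>
#include <string>

// Hypothetical index names mirroring the documented four-view order.
enum QuadViewIndex { LeftContext = 0, RightContext = 1, LeftFocus = 2, RightFocus = 3 };

// Returns a readable name for a view index, given the total view count (2 or 4).
inline std::string viewName(int viewIndex, int viewCount) {
    assert(viewCount == 2 || viewCount == 4);
    assert(viewIndex >= 0 && viewIndex < viewCount);
    if (viewCount == 2) {
        return viewIndex == 0 ? "LEFT" : "RIGHT";
    }
    static const char* kNames[] = {"LEFT_CONTEXT", "RIGHT_CONTEXT", "LEFT_FOCUS", "RIGHT_FOCUS"};
    return kNames[viewIndex];
}

// True when the view belongs to the left eye. This holds for both orderings
// because left views always sit at even indices.
inline bool isLeftEye(int viewIndex) { return viewIndex % 2 == 0; }
```

With this helper, viewName(2, 4) yields LEFT_FOCUS and viewName(1, 2) yields RIGHT.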

Technical specifications

For technical specifications, see the Varjo products technical specifications.

XR-4 Series

Varjo XR-4 has 2 displays but it is still recommended to render 4 views. Due to how the optics function in the HMD, the observable PPD (pixels per degree) is not uniform across the view. This means there is a higher PPD at the center of the view and the PPD drops towards the edges. Therefore it is possible to get better performance by rendering 2 views per eye: 1 for the high PPD area and 1 for the lower PPD area.

Views can be rendered as static which means the high PPD area will be at the center. The quality and the performance can be increased even further with foveated rendering which allows the high PPD area to move along the gaze.

View configurations

The view configurations from most optimal to least optimal are listed in the table below. Less optimal configurations can be used as fallback configurations. These configurations are applicable for all Varjo devices.

| Configuration | Views | Description |
|---|---|---|
| Foveation: dynamic projection | 4 | Focus view is rendered along the gaze |
| Quad | 4 | Used to achieve maximum PPD over the field of view |
| Foveation: variable rate shading | 2 | No focus view, context view shading rate varies along the gaze |
| Stereo | 2 | No focus view or foveated rendering |
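
One way to use the less optimal configurations as fallbacks is to walk the table from top to bottom and pick the first entry the application can support. The sketch below assumes a hypothetical Capabilities struct and ViewConfig enum. How an application actually detects gaze tracking or variable rate shading support is outside its scope.

```cpp
#include <cassert>

// Hypothetical names for the four configurations in the table, most optimal first.
enum class ViewConfig { FoveatedDynamicProjection, Quad, FoveatedVariableRateShading, Stereo };

// Hypothetical description of what the application and system can do.
struct Capabilities {
    bool gazeAvailable;        // eye tracking is usable for foveation
    bool canRenderFourViews;   // engine and performance budget allow 4 views
    bool variableRateShading;  // GPU and graphics API expose VRS
};

// Number of views each configuration renders, as listed in the table.
inline int viewCountFor(ViewConfig c) {
    return (c == ViewConfig::FoveatedDynamicProjection || c == ViewConfig::Quad) ? 4 : 2;
}

// Walks the table from top to bottom and returns the first usable entry.
inline ViewConfig chooseViewConfig(const Capabilities& caps) {
    if (caps.canRenderFourViews && caps.gazeAvailable) return ViewConfig::FoveatedDynamicProjection;
    if (caps.canRenderFourViews) return ViewConfig::Quad;
    if (caps.variableRateShading && caps.gazeAvailable) return ViewConfig::FoveatedVariableRateShading;
    return ViewConfig::Stereo;
}

// Example: no eye tracking, but four views are affordable, so Quad is chosen.
inline void exampleFallback() {
    const Capabilities caps{false, true, true};
    assert(chooseViewConfig(caps) == ViewConfig::Quad);
}
```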

Swap chains

A swap chain is a list of textures that is used for rendering. For each frame, the swap chain has an active texture into which the application must render.

Only swap chain textures can be submitted through Varjo API. The API does not support submission of other textures. If the application cannot render directly into a swap chain texture, it must copy the contents over to the swap chain for frame submission.
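
As a mental model, a swap chain can be pictured as a ring of textures with one active texture per frame. The mock below is a conceptual sketch, not the Varjo implementation. MockSwapChain is a hypothetical class, and the round-robin selection is an assumption made for illustration. The real runtime decides which texture index it hands out.

```cpp
#include <cassert>
#include <cstdint>

// Conceptual stand-in for a swap chain: a fixed ring of textures with one
// active texture per frame. Round-robin selection is an assumption made for
// illustration, not documented Varjo behavior.
class MockSwapChain {
public:
    explicit MockSwapChain(int32_t numberOfTextures) : m_count(numberOfTextures) {
        assert(numberOfTextures > 0);
    }

    // Locks the active texture and returns its index.
    int32_t acquire() {
        assert(!m_acquired && "release the previous image first");
        m_acquired = true;
        return m_next;
    }

    // Unlocks the active texture and advances to the next one in the ring.
    void release() {
        assert(m_acquired && "nothing to release");
        m_acquired = false;
        m_next = (m_next + 1) % m_count;
    }

private:
    int32_t m_count;
    int32_t m_next = 0;
    bool m_acquired = false;
};
```

The point of the model is that the application never chooses the texture itself: it renders into whichever index the acquire step reports.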

A swap chain can be created with varjo_*CreateSwapChain(). A separate variant exists for each supported graphics API. The main configuration is passed in the varjo_SwapChainConfig2 structure:

struct varjo_SwapChainConfig2 {

varjo_TextureFormat textureFormat;

int32_t numberOfTextures;

int32_t textureWidth;

int32_t textureHeight;

int32_t textureArraySize;

};

- textureFormat: The format of the swap chain. The list below documents the formats for swap chains which are always supported regardless of the graphics API. varjo_GetSupportedTextureFormats() can be used to query additional supported formats. All possible values can be found in Varjo_types.h. The most common format is varjo_TextureFormat_R8G8B8A8_SRGB for color swap chains.

- numberOfTextures: Defines how many textures are created for the swap chain. The recommended value is 3. This allows the application to render optimally without being blocked by the Varjo compositor.

- The last three parameters (textureWidth, textureHeight, and textureArraySize) depend on the way the application wants to organize the swap chain data. This is discussed in more detail in the following sections.

Swap chain formats that are always supported:

- varjo_TextureFormat_R8G8B8A8_SRGB

- varjo_DepthTextureFormat_D32_FLOAT

- varjo_DepthTextureFormat_D24_UNORM_S8_UINT

- varjo_DepthTextureFormat_D32_FLOAT_S8_UINT

Note that the user can change the desired texture size from Varjo Base. The application should react to this, typically by recreating the swap chain (or by requesting a restart of the application). The change is signaled by the varjo_EventType_TextureSizeChange event.

Data organization

There are three different ways to organize swap chain data:

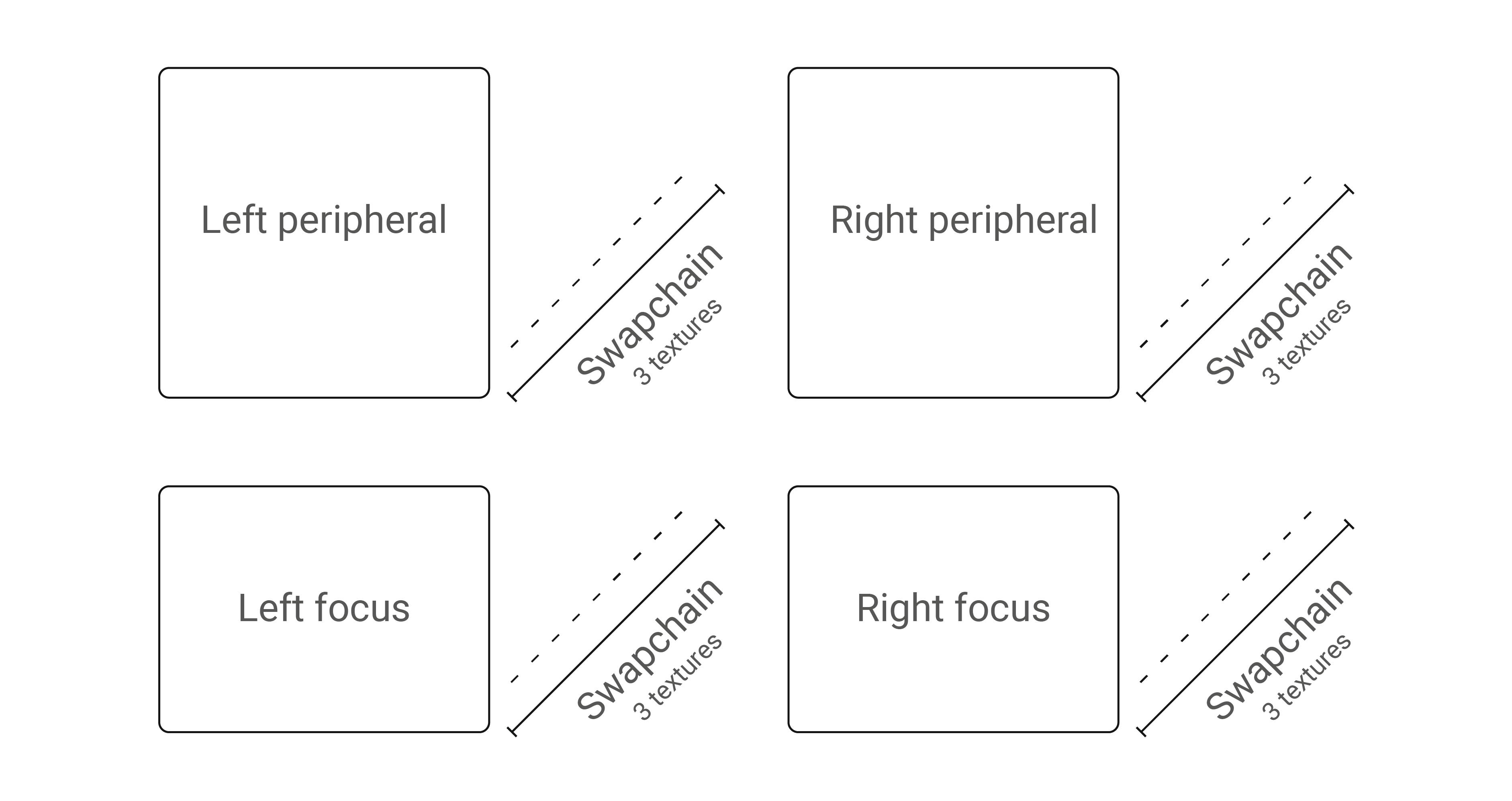

- Multiple swap chains: One per view

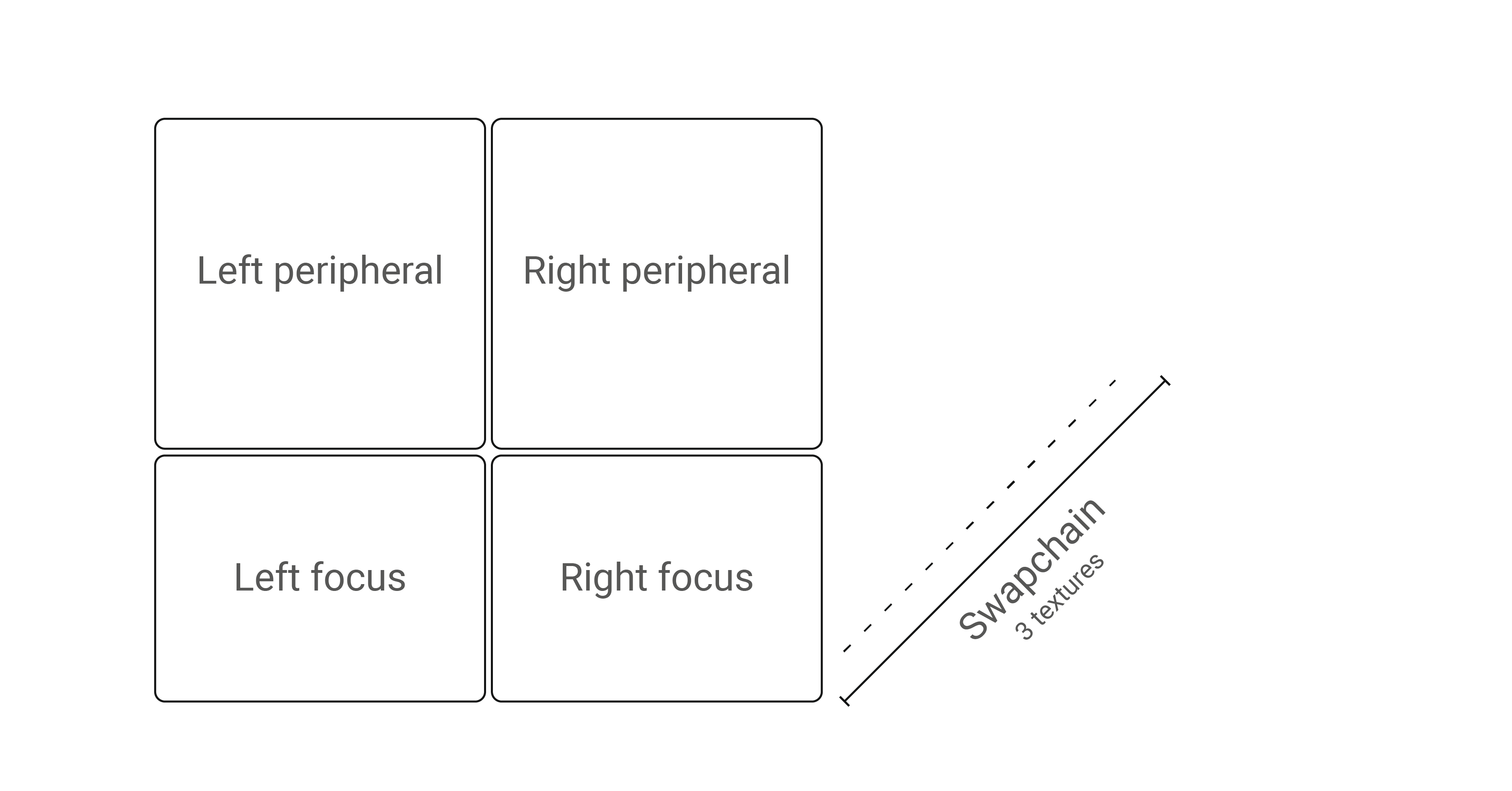

- Texture atlas: All views are rendered into one big texture

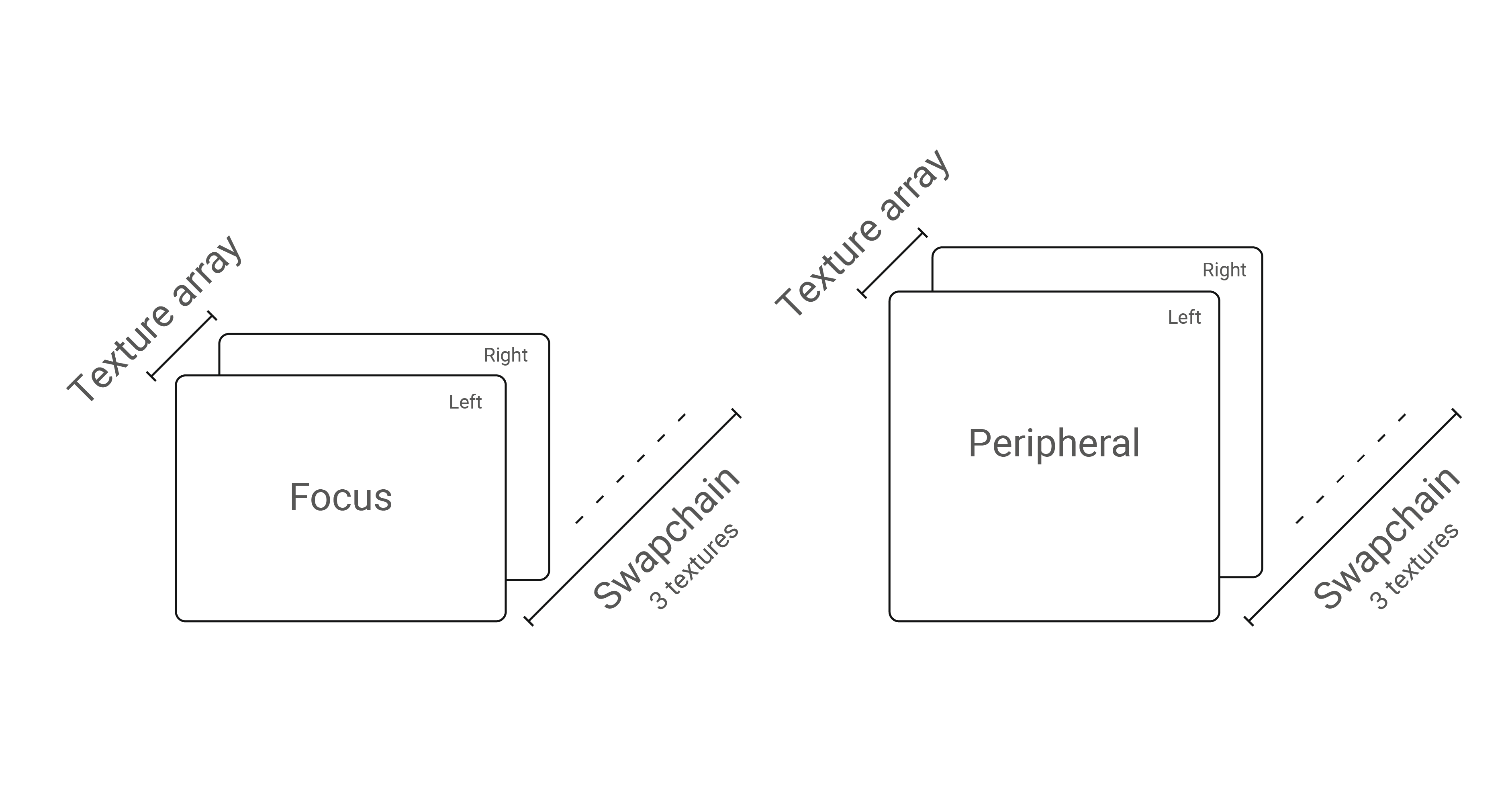

- Texture array: Two swap chains, where each swap chain has two views in a texture array

Additionally, any part of the texture can be picked as a data source with a viewport rectangle and texture array index. For example, a texture atlas may have the rendered views in any order.

Multiple swap chains

Each view has one swap chain.

Texture atlas

All views are rendered into a single swap chain. To identify each view in the atlas, varjo_SwapChainViewport must be used with the correct coordinates and extents.
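
To illustrate how atlas coordinates and extents can be derived, the sketch below packs the views two per row and computes the total atlas size. ViewSize, AtlasRect, layoutAtlas, and atlasExtent are hypothetical stand-ins written for this example. In a real application the per-view sizes would come from varjo_GetTextureSize, and the resulting rectangles would be copied into varjo_SwapChainViewport.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical stand-ins; the real SDK has its own viewport and size types.
struct ViewSize { int32_t width; int32_t height; };
struct AtlasRect { int32_t x; int32_t y; int32_t width; int32_t height; };

// Packs views two per row: [LEFT_CONTEXT, RIGHT_CONTEXT] on the first row,
// [LEFT_FOCUS, RIGHT_FOCUS] on the second. Also works for two views (one row).
inline std::vector<AtlasRect> layoutAtlas(const std::vector<ViewSize>& sizes) {
    assert(sizes.size() == 2 || sizes.size() == 4);
    std::vector<AtlasRect> rects;
    int32_t x = 0, y = 0, rowHeight = 0;
    for (std::size_t i = 0; i < sizes.size(); ++i) {
        if (i > 0 && i % 2 == 0) {  // start a new row after each pair
            x = 0;
            y += rowHeight;
            rowHeight = 0;
        }
        rects.push_back({x, y, sizes[i].width, sizes[i].height});
        x += sizes[i].width;
        if (sizes[i].height > rowHeight) rowHeight = sizes[i].height;
    }
    return rects;
}

// Atlas extents: the furthest right and bottom edges of any rectangle.
inline ViewSize atlasExtent(const std::vector<AtlasRect>& rects) {
    ViewSize total{0, 0};
    for (const AtlasRect& r : rects) {
        if (r.x + r.width > total.width) total.width = r.x + r.width;
        if (r.y + r.height > total.height) total.height = r.y + r.height;
    }
    return total;
}
```

The extents returned by atlasExtent correspond to the textureWidth and textureHeight values of the swap chain configuration. Any other packing works as well, as long as each viewport rectangle matches where the view was rendered.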

Texture array

Varjo API also supports texture arrays. If textureArraySize > 1 is passed in the swap chain configuration, each swap chain texture becomes an array of the given size. When arrays are used, the usual value is textureArraySize = 2. In some cases, this enables optimizations available for rendering stereo pairs.
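
For the layout with two swap chains and two array slices each, every view needs to be mapped to a swap chain and an array index. The following sketch shows one possible mapping, assuming the context pair shares the first swap chain and the focus pair shares the second. ArraySlot and slotForView are hypothetical names used only here.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical result type: which swap chain and which array slice hold a view.
struct ArraySlot { int32_t swapChainIndex; int32_t arrayIndex; };

// Maps a view index in the order [LEFT_CONTEXT, RIGHT_CONTEXT, LEFT_FOCUS, RIGHT_FOCUS]
// to a slot, assuming one swap chain per view type and textureArraySize = 2.
inline ArraySlot slotForView(int32_t viewIndex) {
    assert(viewIndex >= 0 && viewIndex < 4);
    return ArraySlot{viewIndex / 2, viewIndex % 2};
}
```

Under this assumption, the left and right views of a stereo pair always land in slices 0 and 1 of the same texture.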

Layers

A layer is a data structure that contains information of a single sheet of the final image. The final image is composited by Varjo Compositor from all the layers submitted by all active client applications, including the Varjo system apps.

Layer header

To support multiple layer types in the Varjo API, each layer struct (regardless of the type) starts with varjo_LayerHeader:

struct varjo_LayerHeader {

varjo_LayerType type;

varjo_LayerFlags flags;

};

In this structure, type identifies the layer and is always dictated by the actual layer type.

flags can be used to modify how the layer is handled in the compositing phase, for example to enable alpha blending. The default value is varjo_LayerFlagNone, which means that the application submits a simple opaque color layer.

The following options are available:

- varjo_LayerFlag_BlendMode_AlphaBlend: Enable (premultiplied) alpha blending for the layer. Applications must supply a valid alpha. Premultiplied blending means that the compositor assumes the application has already multiplied the given alpha into the color (RGB) values.

- varjo_LayerFlag_DepthTesting: Enable depth testing against the other layers. Layers are always rendered in the layer order. However, when this flag is given, the compositor will only render fragments for which the depth is closer to the camera than the previously rendered layers.

- varjo_LayerFlag_InvertAlpha: When given, the alpha channel is inverted after sampling.

- varjo_LayerFlag_UsingOcclusionMesh: Must be given if the application has rendered the occlusion mesh to enable certain optimizations in the compositor. See below how to render the occlusion mesh.

- varjo_LayerFlag_ChromaKeyMasking: The layer is masked by video see-through chroma key if the flag is given.

- varjo_LayerFlag_Foveated: Indicate to compositor that the layer was rendered with foveation.
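
To make the premultiplied alpha requirement concrete, the sketch below converts a straight-alpha color to premultiplied form and applies the conventional "over" operator. It illustrates the general technique only. Rgba, premultiply, and blendOver are hypothetical helpers, and the compositor's exact blending arithmetic is not specified in this document.

```cpp
#include <cassert>
#include <cmath>

struct Rgba { float r, g, b, a; };

// Tolerant float comparison used when checking results.
inline bool nearlyEqual(float x, float y, float eps = 1e-6f) { return std::fabs(x - y) <= eps; }

// Converts a straight (non-premultiplied) color to premultiplied form.
inline Rgba premultiply(const Rgba& c) {
    assert(c.a >= 0.0f && c.a <= 1.0f);
    return Rgba{c.r * c.a, c.g * c.a, c.b * c.a, c.a};
}

// Conventional "over" operator for a premultiplied source on top of a destination:
// out = src + (1 - src.a) * dst.
inline Rgba blendOver(const Rgba& src, const Rgba& dst) {
    const float k = 1.0f - src.a;
    return Rgba{src.r + k * dst.r, src.g + k * dst.g, src.b + k * dst.b, src.a + k * dst.a};
}
```

For example, half-transparent red (1, 0, 0, 0.5) becomes (0.5, 0, 0, 0.5) after premultiplication, and blending it over opaque blue gives (0.5, 0, 0.5, 1). Submitting straight-alpha colors with the alpha blend flag set would make semi-transparent areas too bright, because the source color would not be attenuated.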

See Varjo_types_layers.h for more information.

Projection layer

The only layer type provided at the moment is the multi-projection layer. Each layer of this type contains a number of views tied to a certain reference space.

The varjo_LayerMultiProj struct describes these:

struct varjo_LayerMultiProj {

struct varjo_LayerHeader header;

varjo_Space space;

int32_t viewCount;

struct varjo_LayerMultiProjView* views;

};

varjo_LayerMultiProjView describes the parameters for a single view:

struct varjo_LayerMultiProjView {

struct varjo_ViewExtension* extension;

struct varjo_Matrix projection;

struct varjo_Matrix view;

struct varjo_SwapChainViewport viewport;

};

The application should query the recommended texture sizes with varjo_GetTextureSize. The returned sizes depend on the resolution quality setting in Varjo Base. Varjo compositor also supports submitting a stereo pair. For stereo rendering, varjo_LayerMultiProj::views should contain just two context views.

Rendering step-by-step

The rendering flow for Varjo HMDs can be described as follows:

- Initialization

  - Initialize the Varjo system with varjo_SessionInit.

  - Decide what kind of view configuration is suitable for your application.

  - Set up the viewports using the info returned by varjo_GetTextureSize.

  - Create as many swap chains as needed via varjo_D3D11CreateSwapChain, varjo_D3D12CreateSwapChain, varjo_GLCreateSwapChain or varjo_VKCreateSwapChain. For the format, use one that is always supported, or query support with varjo_GetSupportedTextureFormats.

  - Enumerate swap chain textures with varjo_GetSwapChainImage and create render targets.

  - Create frame info for per-frame data with varjo_CreateFrameInfo.

- Rendering (in the render thread)

  - Call varjo_WaitSync to wait for the optimal time to start rendering. This fills in the varjo_FrameInfo structure with the latest pose data. While varjo_FrameInfo contains the projection matrix, the recommended way is to build the projection matrix from tangents with varjo_GetProjectionMatrix().

  - Begin rendering the frame by calling varjo_BeginFrameWithLayers.

  - For each viewport:

    - Acquire the swap chain image with varjo_AcquireSwapChainImage().

    - Render the frame into the swap chain texture at the index returned by the previous step.

    - Release the swap chain image with varjo_ReleaseSwapChainImage().

  - Submit textures with varjo_EndFrameWithLayers. This tells Varjo Runtime that it can now draw the submitted frame.

- Shutdown

  - Free the allocated varjo_FrameInfo structure.

  - Shut down the session by calling varjo_SessionShutDown().

Simple example code for these steps with additional descriptions of the functions is shown below. The examples assume that there exists a Renderer class which abstracts the actual frame rendering.

Note: In Vulkan there are 3 additional functions that need to be called: varjo_GetInstanceExtensionsVk, varjo_GetDeviceExtensionsVk and varjo_GetPhysicalDeviceVk.

Initialization

The following example shows basic initialization. Use varjo_To<D3D11|D3D12|GL|Vk>Texture to convert varjo_Texture to native types.

varjo_Session* session = varjo_SessionInit();

// Initialize rendering engine with a given luid

// To make sure that compositor and application run on the same GPU

varjo_Luid luid = varjo_D3D11GetLuid(session);

Renderer renderer{luid};

std::vector<varjo_Viewport> viewports = CalculateViewports(session);

const int viewCount = varjo_GetViewCount(session);

varjo_SwapChain* swapchain{nullptr};

varjo_SwapChainConfig2 config{};

config.numberOfTextures = 3;

config.textureHeight = GetTotalHeight(viewports);

config.textureWidth = GetTotalWidth(viewports);

config.textureFormat = varjo_TextureFormat_R8G8B8A8_SRGB; // Supported by OpenGL and DirectX

config.textureArraySize = 1;

swapchain = varjo_D3D11CreateSwapChain(session, &config);

std::vector<Renderer::Target> renderTargets(config.numberOfTextures);

for (int swapChainIndex = 0; swapChainIndex < config.numberOfTextures; swapChainIndex++) {

varjo_Texture swapChainTexture = varjo_GetSwapChainImage(swapchain, swapChainIndex);

// Create as many render targets as there are textures in a swapchain

renderTargets[swapChainIndex] = renderer.initLayerRenderTarget(swapChainIndex, varjo_ToD3D11Texture(swapChainTexture), config.textureWidth, config.textureHeight);

}

varjo_FrameInfo* frameInfo = varjo_CreateFrameInfo(session);

Frame setup

In this example we will submit one layer. We preallocate a vector with as many views as the runtime supports.

std::vector<varjo_LayerMultiProjView> views(viewCount);

for (int i = 0; i < viewCount; i++) {

const varjo_Viewport& viewport = viewports[i];

views[i].viewport = varjo_SwapChainViewport{swapchain, viewport.x, viewport.y, viewport.width, viewport.height, 0, 0};

}

varjo_LayerMultiProj projLayer{{varjo_LayerMultiProjType, 0}, 0, viewCount, views.data()};

varjo_LayerHeader* layers[] = {&projLayer.header};

varjo_SubmitInfoLayers submitInfoWithLayers{frameInfo->frameNumber, 0, 1, layers};

Rendering loop

The render loop starts with a varjo_WaitSync() function call. This function will block until the optimal moment for the application to start rendering.

varjo_WaitSync() fills in varjo_FrameInfo. This data structure contains all important information related to the current frame and pose. varjo_FrameInfo has three fields:

- views: Array of varjo_ViewInfo, as many as the count returned by varjo_GetViewCount(). Views are in the same order as when enumerated with varjo_GetViewDescription.

- displayTime: Predicted time, in nanoseconds, when the rendered frame will be shown in the HMD.

- frameNumber: Number of the current frame. Monotonically increasing.

For each view, varjo_ViewInfo contains the view and projection matrices, and whether the view should be rendered or not. Please note that the recommended way is to build the projection matrix from tangents with varjo_GetProjectionMatrix().

- viewMatrix: World-to-eye matrix (column major).

- projectionMatrix: Projection matrix (column major). Applications need to patch the depth transform for the matrix using varjo_UpdateNearFarPlanes. Applications should not assume that this matrix is constant.

while (!quitRequested) {

// Waits for the best moment to start rendering next frame

// (can be executed in another thread)

varjo_WaitSync(session, frameInfo);

// Indicates to the compositor that application is about to

// start real rendering work for the frame (has to be executed in the render thread)

varjo_BeginFrameWithLayers(session);

int swapChainIndex;

// Locks swap chain image and gets the current texture index

// within the swap chain the application should render to

varjo_AcquireSwapChainImage(swapchain, &swapChainIndex);

renderTargets[swapChainIndex].clear();

float time = (frameInfo->displayTime - startTime) / 1000000000.0f;

for (int i = 0; i < viewCount; i++) {

varjo_Viewport viewport = viewports[i];

// Update depth transform to match the desired one

varjo_FovTangents tangents = varjo_GetFovTangents(session, i);

varjo_Matrix projectionMatrix = varjo_GetProjectionMatrix(&tangents);

varjo_UpdateNearFarPlanes(projectionMatrix.value, varjo_ClipRangeZeroToOne, 0.01, 300);

// Render into the texture acquired for this frame (indexed by swapChainIndex, not by view)

renderTargets[swapChainIndex].renderView(viewport.x, viewport.y, viewport.width, viewport.height, projectionMatrix, frameInfo->views[i].viewMatrix, time);

}

// Unlocks swapchain image

varjo_ReleaseSwapChainImage(swapchain);

}
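
For intuition about what a projection built from tangents contains, the sketch below constructs an off-axis, column-major projection matrix by hand. It is an illustration under stated assumptions rather than a replacement for varjo_GetProjectionMatrix(). The Tangents struct, its signed convention (left and bottom negative), the right-handed view space looking down -Z, and the zero-to-one depth range are all choices made for this example.

```cpp
#include <array>
#include <cassert>
#include <cmath>

// Hypothetical signed half-angle tangents: left and bottom are negative for a
// frustum that contains the forward axis.
struct Tangents { double left, right, bottom, top; };

// Tolerant comparison used when checking projected coordinates.
inline bool closeTo(double a, double b, double eps = 1e-9) { return std::fabs(a - b) <= eps; }

// Builds a column-major projection for a right-handed view space looking down -Z,
// mapping depth nearZ..farZ to clip-space z in 0..1.
inline std::array<double, 16> projectionFromTangents(const Tangents& t, double nearZ, double farZ) {
    assert(t.right > t.left && t.top > t.bottom && nearZ > 0.0 && farZ > nearZ);
    std::array<double, 16> m{};  // all elements start at zero
    m[0] = 2.0 / (t.right - t.left);
    m[5] = 2.0 / (t.top - t.bottom);
    m[8] = (t.right + t.left) / (t.right - t.left);  // horizontal off-axis shift
    m[9] = (t.top + t.bottom) / (t.top - t.bottom);  // vertical off-axis shift
    m[10] = farZ / (nearZ - farZ);
    m[11] = -1.0;                                    // w_clip = -z_view
    m[14] = nearZ * farZ / (nearZ - farZ);
    return m;
}

// Projects a view-space point and returns normalized device coordinates.
inline std::array<double, 3> toNdc(const std::array<double, 16>& m, double x, double y, double z) {
    const double cx = m[0] * x + m[4] * y + m[8] * z + m[12];
    const double cy = m[1] * x + m[5] * y + m[9] * z + m[13];
    const double cz = m[2] * x + m[6] * y + m[10] * z + m[14];
    const double cw = m[3] * x + m[7] * y + m[11] * z + m[15];
    return {cx / cw, cy / cw, cz / cw};
}
```

With asymmetric tangents, a point on the right frustum edge projects to x = 1 and a point on the left edge to x = -1, even though the forward axis is not at the center of the image. This is why the matrix cannot be derived from a single field-of-view angle.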

Frame submission

varjo_SubmitInfoLayers describes the list of layers which will be submitted to the compositor.

- frameNumber: Fill with the frame number given by varjo_WaitSync.

- layerCount: How many layers are in the layer array.

- layers: Pointer to the array of layers to be submitted.

...

// Copy view and projection matrices

// (projectionMatrix: the 16 values of the projection matrix used to render view i)

for (int i = 0; i < viewCount; i++) {

const varjo_ViewInfo viewInfo = frameInfo->views[i];

std::copy(projectionMatrix, projectionMatrix + 16, views[i].projection.value);

std::copy(viewInfo.viewMatrix, viewInfo.viewMatrix + 16, views[i].view.value);

}

submitInfoWithLayers.frameNumber = frameInfo->frameNumber;

varjo_EndFrameWithLayers(session, &submitInfoWithLayers);

Events

Varjo API uses events to notify users about changes to the system and user input. All events are listed in varjo_Events.h. For example, events can be polled as follows:

varjo_Event evt{};

while (varjo_PollEvent(session, &evt)) {

switch (evt.header.type) {

case varjo_EventType_Button: {

if (evt.data.button.buttonId == varjo_ButtonId_Application && evt.data.button.pressed) {

// Do something when the HMD button is pressed

}

} break;

case varjo_EventType_TextureSizeChange: {

// Desired texture size has changed, recreate the swapchains

// New texture sizes can be obtained with varjo_GetTextureSize

renderer.recreateSwapchains();

} break;

}

}

If the application does not poll all the events, unpolled events will eventually be lost.

Shutdown

The following functions should be used to uninitialize the allocated data structures.

varjo_FreeFrameInfo(frameInfo);

varjo_FreeSwapChain(swapchain);

varjo_SessionShutDown(session);
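
In C++ applications, the release order can be enforced with RAII instead of manual calls. The sketch below shows the idea with stand-in types so that it compiles without the SDK. FakeSession, FakeSwapChain, FakeFrameInfo, and the fake free functions are hypothetical placeholders for the Varjo handles and for varjo_SessionShutDown, varjo_FreeSwapChain, and varjo_FreeFrameInfo.

```cpp
#include <cassert>
#include <memory>
#include <string>
#include <vector>

// Stand-ins for the opaque Varjo handles, so the sketch compiles without the SDK.
struct FakeSession {};
struct FakeSwapChain {};
struct FakeFrameInfo {};

// Records the order in which the fake free functions run.
inline std::vector<std::string>& freeLog() {
    static std::vector<std::string> log;
    return log;
}

// Fake free functions: each logs its name and releases the stand-in object.
inline void fakeSessionShutDown(FakeSession* s) { assert(s); freeLog().push_back("session"); delete s; }
inline void fakeFreeSwapChain(FakeSwapChain* c) { assert(c); freeLog().push_back("swapchain"); delete c; }
inline void fakeFreeFrameInfo(FakeFrameInfo* f) { assert(f); freeLog().push_back("frameInfo"); delete f; }

// Members are destroyed in reverse declaration order, so declaring the session
// first guarantees that it is shut down last.
struct RenderResources {
    std::unique_ptr<FakeSession, void (*)(FakeSession*)> session{new FakeSession, fakeSessionShutDown};
    std::unique_ptr<FakeSwapChain, void (*)(FakeSwapChain*)> swapChain{new FakeSwapChain, fakeFreeSwapChain};
    std::unique_ptr<FakeFrameInfo, void (*)(FakeFrameInfo*)> frameInfo{new FakeFrameInfo, fakeFreeFrameInfo};
};
```

When a RenderResources object goes out of scope, the frame info is freed first, then the swap chain, and finally the session, which matches the order of the calls listed above.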